Tell GPT It Can Scrape My -

Publicly shared content which I approved beforehand

Howdy, Daniel here with another digest 💌

If you’ve read any tech news or scrolled through any social media recently, you’ve probably seen people get angry at (not CrowdStrike) yet another AI tool/company.

Artists, writers, software developers, and anyone else who creates original content are freaking out, and rightfully so, about how AI is using their data. More specifically, about how crawlers gather data for training AI models in a way that routinely results in the unauthorized use of personal or proprietary information.

And it’s not just about creativity or hard work; we all know that if you search the internet hard enough, you can find sensitive information that really shouldn’t be ingested in bulk by your friendly neighborhood AI bot. It also doesn't help that ChatGPT tends to simply “hallucinate” false information about individuals. Combining this wonderful trait with openly available data, as abundant or sparse as it may be, can lead to devastating results and potential violations of all the big four-letter acronyms - GDPR, CCPA, HIPAA, you name it (HIPAA is 5 letters ((I don’t care))).

The battle over these issues is all the rage as of late. Cloudflare has already declared war on Microsoft, Google, and OpenAI's bots, with tools to block all crawler services. Adobe, meanwhile, had to issue apologies and clarifications amid the crossfire of intellectual property lawsuits, after announcing new terms of service that many users understood as forcing them to grant unlimited access to their work for the purpose of training Adobe’s generative AI.

The thing is, not every AI bot out there is evil and is used to generate the most mediocre content you've ever laid your eyes on (If you want to fight me on this, next Substack is going to be GPT generated, start with “In the modern era of the internet”, and include the word “Delve” 62 times).

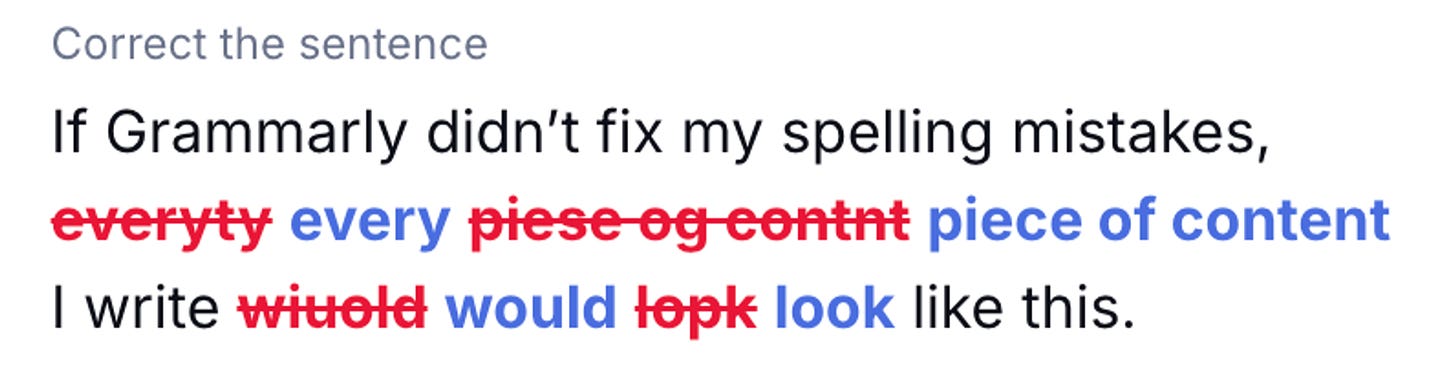

If Grammarly didn’t fix my spelling mistakes, everyty piese og contnt I write wiuold lopk like this. Google’s search indexing bots help our content appear in Google’s search results, which is kinda how people find out it exists.

So we can’t shun the whole concept entirely. But one thing’s for sure - as developers, we need an effective way to monitor and manage bots' access to our applications and provide our users with an experience that safeguards their data in our applications.

What can we do about it?

I’m here to talk about this wonderful button I saw right here in my Substack settings:

Now, in the specific case of this newsletter, I don’t care if GPT tries to replicate my writing style and steal my memes.

But the fact that I have the option to block AI from training on my original content should be the de-facto standard for every application out there. At least, that’s what I believe. And I can tell you how you can make that happen without writing a bunch of new complex features from scratch or generating code with ChatGPT.

Also, we can expand it beyond “Tools which respect this setting” and allow our users to decide who can access what.

But how, Daniel??

Hear me out - three steps:

We identify and rank our users to distinguish between humans, beneficial bots, and those with potential malicious intentions.

We classify user data based on its sensitivity and apply Fine-Grained Authorization (FGA) controls.

We give users direct control over which services can access their data through easy-to-use interfaces.

To do that, we’ll need two tools: ArcJet for ranking bots and Permit.io for FGA and embeddable access control interfaces.

Step 1: Who’s trying to access my app?

Let’s start with the basics: who (or what) is trying to access your app? That, as I’ve covered in a previous post here, can be a pretty complex task on its own. With bots in the mix, it gets even more complicated. Traditionally, you’d rely on your authentication layer to tell you who your users are. But with hybrid identities like automated systems or bots, which interact with our application programmatically and can easily use a non-native method to authenticate, trusting a binary answer from our authentication service is no longer enough.

So, what do you do? You rank them. More specifically, you can use ArcJet’s user ranking system, which provides ranks for these identities, giving us a more in-depth answer to our “Who” question.

Here’s an example of an ArcJet-based bot rating system:

NOT_ANALYZED: Request analysis failed; might lack sufficient data. Score: 0.

AUTOMATED: Confirmed bot-originated request. Score: 1.

LIKELY_AUTOMATED: Possible bot activity with varying certainty. Score: 2-29.

LIKELY_NOT_A_BOT: Likely human-originated request. Score: 30-99.

VERIFIED_BOT: Confirmed beneficial bot. Score: 100.

Each rank level also has a detailed score, allowing us to fine-tune the levels for configuration.
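To make the levels above a bit more tangible, here is a minimal Python sketch that maps a numeric score onto the rank names from the list. It is not ArcJet's SDK; `BotRank`, `rank_from_score`, and `is_probably_human` are names I made up for illustration, and the only thing taken from the list above is the score ranges themselves.

```python
from enum import Enum


class BotRank(Enum):
    """Rank names copied from the list above; the enum itself is hypothetical."""
    NOT_ANALYZED = "NOT_ANALYZED"
    AUTOMATED = "AUTOMATED"
    LIKELY_AUTOMATED = "LIKELY_AUTOMATED"
    LIKELY_NOT_A_BOT = "LIKELY_NOT_A_BOT"
    VERIFIED_BOT = "VERIFIED_BOT"


def rank_from_score(score: int) -> BotRank:
    """Map a 0-100 score onto the rank levels listed above."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    if score == 0:
        return BotRank.NOT_ANALYZED
    if score == 1:
        return BotRank.AUTOMATED
    if score <= 29:
        return BotRank.LIKELY_AUTOMATED
    if score <= 99:
        return BotRank.LIKELY_NOT_A_BOT
    return BotRank.VERIFIED_BOT


def is_probably_human(score: int, threshold: int = 30) -> bool:
    """Hypothetical helper: raise `threshold` (within 30-99) to be stricter."""
    return threshold <= score <= 99
```

The `threshold` parameter is where the "detailed score" earns its keep: an app with sensitive data could demand, say, 60 or above before treating a request as human, while a public blog could stick with the default.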

By ranking user activity in real-time, we establish the first aspect required to answer the question of “Who is accessing the data?” when determining “What can they do?”

This means we can now distinguish between different types of bots and human interactions, taking one step further in protecting user data from being unintentionally or maliciously used for AI training.

Step 2: What data should they be able to access?

Once you’ve ranked your users (Humans, Bots, Zuckerbergs), the next step is to classify the data they’re trying to access. Not all data is created equal - some of it is more sensitive than the rest and needs better protection.

This is where Fine-Grained Authorization (FGA) comes in, with an answer to the question: “Who can do what inside our application, and under what circumstances?”

To implement FGA, you need to consider both user attributes (like the rankings we just talked about) and resource attributes (the sensitivity of the data).

Creating access control rules (policies) like “An AI crawler bot can only have read access to documents marked as public” is a classic use case for Attribute-Based Access Control (ABAC).

This, as you might know, as an avid, dedicated, die-hard reader of this Substack, is something you can do without writing any policy code from scratch if you use Permit.io’s no-code policy editor. With Permit, you can define ABAC User Sets (combinations of users and their attributes) and Resource Sets (combinations of data and its attributes). This way, you can set up strict rules about which users get access to which types of data.
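If you'd like to see the logic behind a rule like “An AI crawler bot can only have read access to documents marked as public” spelled out, here is a plain-Python sketch. It does not use Permit.io's SDK or policy format. The `User` and `Document` classes, the `is_ai_crawler` and `visibility` attributes, and the owner rule for humans are all assumptions I added for illustration; the two `in_..._set` functions play the role of a User Set and a Resource Set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    key: str
    bot_rank: str  # rank name from Step 1, e.g. "VERIFIED_BOT"
    is_ai_crawler: bool = False  # hypothetical attribute


@dataclass(frozen=True)
class Document:
    key: str
    owner: str
    visibility: str  # hypothetical attribute: "public" or "private"


def in_ai_crawler_set(user: User) -> bool:
    """User Set: verified bots that identify as AI crawlers."""
    return user.is_ai_crawler and user.bot_rank == "VERIFIED_BOT"


def in_public_docs_set(doc: Document) -> bool:
    """Resource Set: documents marked as public."""
    return doc.visibility == "public"


def is_allowed(user: User, action: str, doc: Document) -> bool:
    """Evaluate the ABAC rules; anything not explicitly allowed is denied."""
    if in_ai_crawler_set(user):
        # The rule from the text: crawlers may only read public documents.
        return action == "read" and in_public_docs_set(doc)
    if user.bot_rank == "LIKELY_NOT_A_BOT":
        # Assumed rule: humans can do anything with their own documents
        # and can read public ones.
        return doc.owner == user.key or (
            action == "read" and in_public_docs_set(doc)
        )
    return False  # deny by default, including unverified bots


crawler = User(key="crawler-1", bot_rank="VERIFIED_BOT", is_ai_crawler=True)
public_post = Document(key="post-1", owner="daniel", visibility="public")
private_draft = Document(key="draft-1", owner="daniel", visibility="private")

print(is_allowed(crawler, "read", public_post))    # True
print(is_allowed(crawler, "read", private_draft))  # False
```

The deny-by-default branch at the end is the important design choice here: a request from an identity that fits no set gets nothing, rather than whatever the last rule happened to allow.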

A detailed guide for this exact use case is in the works! So be sure to check it out next week on our blog. I’ll be posting an update once it's ready in our Slack Community - so join that if you haven’t already.

Step 3: Allow your users to make the decisions themselves

In ye olden days, before the internet, we used to talk about Mandatory Access Control (MAC) and Discretionary Access Control (DAC). With MAC, access control decisions were left entirely to software developers or system administrators; with DAC, you could let your users have direct influence over access control decisions regarding their own data.

While these are great overall strategies, they don’t make for an access control layer. The combination of these two strategies is where it’s at - MAC can be used to enforce strict access control for critical application components, while DAC can be employed in areas where you need user flexibility and control.

These days, when we are actually planning an access control layer, it makes much more sense to talk about Fine Grained Authorization based models. When we combine FGA with DAC and MAC, we get a complex access control model that is restricted in critical places and user-friendly within secure boundaries where that makes sense.
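Here is one way the MAC-plus-DAC combination could look in code, as a rough Python sketch rather than anything tied to a specific product. The sensitivity labels (`"medical"`, `"payment"`), the `Content` class, and the service name `"gpt-crawler"` are all hypothetical. The mandatory rule is fixed by the developer and always wins; the discretionary allow list is whatever the content owner decides.

```python
from dataclasses import dataclass, field

# MAC: set by developers or admins. Users cannot override these labels.
MANDATORY_DENY_LABELS = {"medical", "payment"}  # hypothetical labels


@dataclass
class Content:
    owner: str
    label: str  # sensitivity classification from Step 2
    # DAC: services the owner has chosen to let in.
    allowed_services: set[str] = field(default_factory=set)


def grant(content: Content, acting_user: str, service: str) -> None:
    """DAC: only the owner may add a service to their own allow list."""
    if acting_user != content.owner:
        raise PermissionError("only the owner can grant access")
    content.allowed_services.add(service)


def can_service_read(content: Content, service: str) -> bool:
    """MAC is checked first and always wins; DAC applies inside that boundary."""
    if content.label in MANDATORY_DENY_LABELS:
        return False
    return service in content.allowed_services


newsletter = Content(owner="daniel", label="public")
records = Content(owner="daniel", label="medical")

grant(newsletter, "daniel", "gpt-crawler")
grant(records, "daniel", "gpt-crawler")  # the owner can try...

print(can_service_read(newsletter, "gpt-crawler"))  # True
print(can_service_read(records, "gpt-crawler"))     # False: MAC overrides DAC
```

Checking the mandatory rule before the user's own choices is what keeps the model "restricted in critical places": even a user who opts in can't open up data the application has decided must never leave.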

In this context, using something like Permit.io’s Elements feature, with its set of prebuilt, embeddable UI components, allows your users to manage their own permissions. These allow you, as a developer, to put the control over these access control functionalities into the hands of your users (Who, as I mentioned previously, really want it). They want to give a specific bot access to their content? No problem. They need to request permission to view something? Done and done.

It’s not some groundbreaking idea (You saw the Substack button), but the fact that you can give your users this control without coding it from scratch is, you know - good.

AI isn’t going away, and neither are the data privacy challenges that come with it. But ranking your bots, classifying your data, and giving your users control over who can access their data and what they can do with it is a good start.

That’s it for this week -

Adios,

Daniel 🌸